You’ve probably noticed how your phone unlocks instantly, even in airplane mode. Or how a smartwatch can spot irregular heart rhythms without sending anything to the cloud. These little moments feel effortless, almost quiet in how they work. But behind that silence is a huge shift in how machines think. A few years ago, most of this intelligence required a round trip to a server farm somewhere far away. Now it happens right on the device, inches from your hand.

That’s the real story of Edge-AI on-device inference frameworks, the engine behind that feeling of instant intelligence. And once you start paying attention, you realize this change isn’t some small optimization. It’s a full rewrite of the computing model we’ve been using for decades.

Before we dive deeper, here’s a simple truth: machines are finally learning to think where the data lives. No detours. No waiting. No whispering to the cloud. Just local computation that feels personal, fast, and oddly alive.

Let’s walk through what’s happening: how these frameworks work, why they matter, and the quiet ways they’re reshaping the devices we carry every day.

Why Edge AI Feels Like the Next Leap Forward

Most people never see the infrastructure behind AI. The rows of servers. The cooling systems. The routes data takes before it returns to your device. And honestly, they shouldn’t have to. But when that entire pipeline disappears and actions start happening instantly, you notice the difference even if you can’t explain it yet.

Imagine using your phone on a mountain trail, far from any signal, and still getting real-time language translation. Or using a camera app that recognizes scenes without needing to contact a server. These experiences are becoming normal, not because the cloud got closer, but because the device itself became smarter.

That’s the heart of edge inference: models that run next to the sensors that collect the data. Think about how natural that feels. Your eyes don’t send your vision to a satellite and wait for feedback. They process everything locally, in real time. Devices are starting to mimic that same logic.

A friend told me recently about walking into his home office one morning and noticing his smart monitor automatically adjusting brightness before he touched anything. He checked the logs later and realized the model runs entirely on-device. No cloud sync needed. It just watched the ambient light and adapted. That’s the kind of subtle, everyday magic edge frameworks unlock.

And it’s just getting started.

So What Is Edge AI, Really? A Simple Take That Doesn’t Sound Like a Textbook

Picture a tiny brain tucked inside your device. It’s not as huge or complex as a data center, but it’s quick and tuned for the things you do every day. It listens to sensors. It learns patterns. It responds right away. That’s edge AI.

In practical terms, edge AI means running AI inference directly on hardware like:

- Smartphones

- Wearables

- Home assistants

- Drones

- Smart cameras

- Industrial sensors

- Microcontrollers (TinyML territory)

Instead of uploading data to cloud servers, the device itself analyzes, predicts, and decides.

When someone asks, “What is AI inference at the edge?”, this is the clean answer:

It’s the moment when your device stops depending on a faraway server and starts thinking for itself.

And honestly, once you’ve experienced that kind of speed and privacy, everything else feels outdated.

A Quick Coffee Table Example: Why It Clicks for People

You’re making coffee. Your kitchen gets bright for a second as the sun breaks through the morning clouds. A smart thermostat nudges the AC down a bit because the room temperature just tipped upward. No lag. No spinning icon. The decision happens in a heartbeat.

If that model lived in the cloud, three things would get in the way immediately:

- It’d need a stable connection.

- It’d need to send sensor readings out.

- It’d wait for the server to reply.

That’s too slow for small decisions.

And too risky for private ones.

On-device inference solves that entire back-and-forth by deleting the “back” part of the loop. The device simply acts.

Feels obvious, right?

That’s why this shift is so big.

The Frameworks That Make All of This Possible

Now we’re getting into the real machinery: the platforms quietly powering this jump. These frameworks are built to make local AI not just possible, but efficient on small chips with tight power budgets.

Here are the ones shaping 2025–2026.

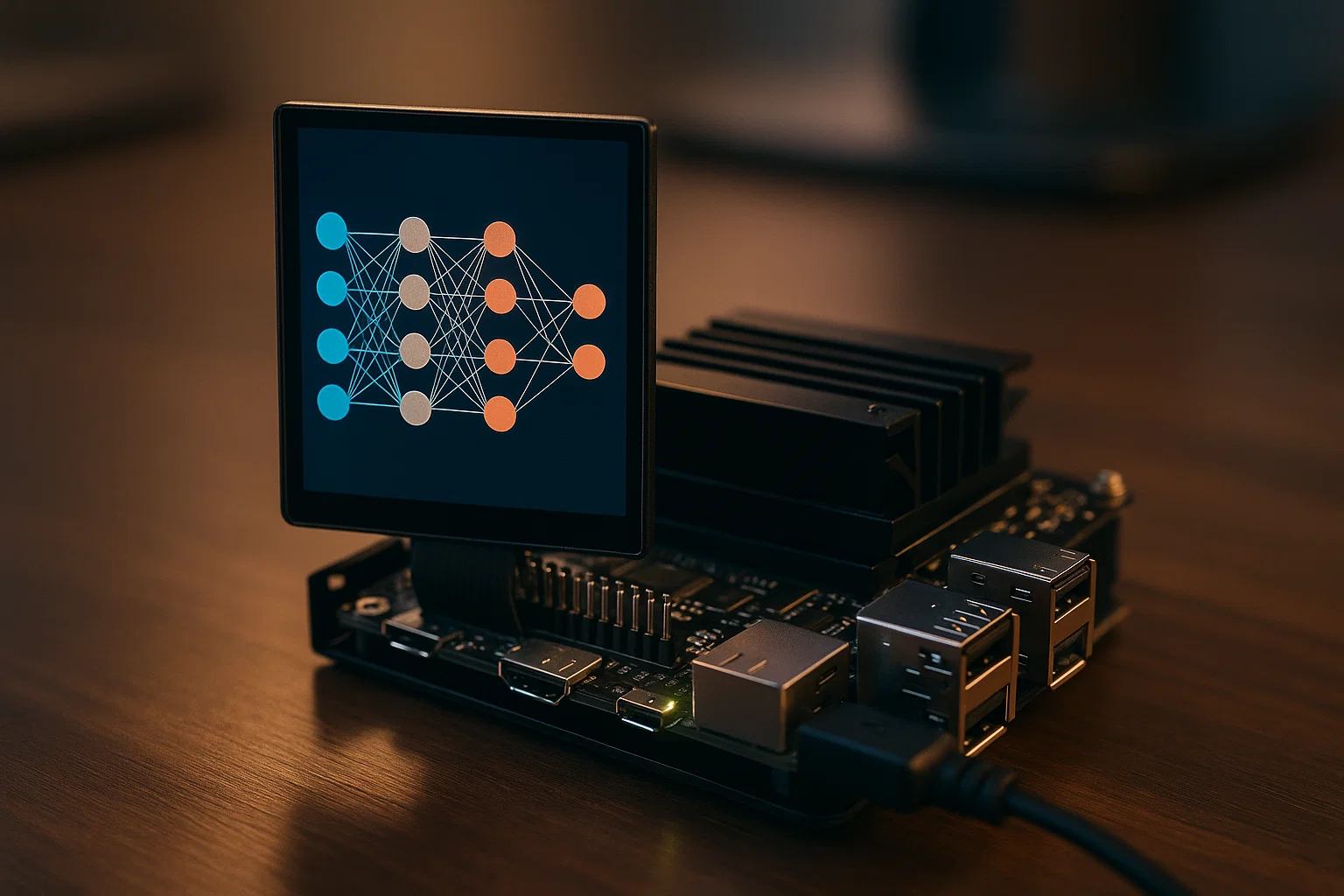

TensorFlow Lite (TFLite): The Lightweight Workhorse

Google designed TFLite (rebranded as LiteRT in 2024) to shrink big neural networks down into something your phone can handle without melting. It works especially well for:

- Image classification

- Gesture recognition

- On-device speech

- Object detection

What stands out is how aggressively it uses quantization. Converting a model’s weights from float32 to int8 cuts its size roughly fourfold, often with only a small loss in accuracy. That’s how your camera app detects faces so quickly without touching the cloud.

One developer told me he shaved inference time on a Raspberry Pi from 320 ms to 85 ms just by switching to a TFLite int8 version of his model. That’s the kind of gap you can feel as a user.
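To make the float32-to-int8 idea concrete, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is not TFLite’s API or its exact algorithm; the function names and the sample weights are made up for illustration. Each weight goes from 4 bytes to 1, and the round-trip error stays within half a quantization step.

```python
def quantize_int8(values):
    """Map floats to int8 codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / 127.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the int8 codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only to illustrate the round trip.
weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 codes:", codes)
print("largest round-trip error:", max_error)
```

The design choice here is a single scale for the whole tensor, which keeps the math simple. Real toolchains often use per-channel scales and calibration data to keep accuracy loss small.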

Core ML: Apple’s Quiet Powerhouse

Apple doesn’t brag about everything, but one thing they consistently nail is on-device ML performance. Their Neural Engine can run trillions of operations per second while sipping power.

Core ML gives your iPhone the ability to run:

- Offline text recognition

- Smart camera enhancements

- Real-time AR

- Fitness and health insights

It’s personal, private, and remarkably fast.

If you’ve ever used offline Siri commands or tried Live Photos where motion gets stabilized instantly, that’s Core ML doing its thing.

Qualcomm AI Engine & SNPE: Speed on a Chip

On Android hardware, Qualcomm rules this world. Its AI Engine and SNPE (Snapdragon Neural Processing Engine) SDK are designed to take advantage of:

- Hexagon DSPs

- Adreno GPUs

- Custom NPU blocks

According to Qualcomm’s own benchmarks, the Snapdragon X Elite series runs quantized and sparse models nearly 38% faster than the 2023 generation.

This is why mid-range Android phones are starting to run tasks that once required a cloud connection.

NVIDIA TensorRT and Jetson Frameworks: The Muscle for Robotics

When you move into robotics, industrial automation, and drones, NVIDIA owns this space. Jetson devices like the Orin Nano and Xavier NX can run serious models locally:

- YOLO-based object detection

- SLAM mapping

- Pose estimation

- Predictive maintenance algorithms

TensorRT is the secret sauce here. It squeezes every ounce of compute out of GPU cores by optimizing computation graphs and fusing operations.

I saw a demo where a Jetson Xavier performed real-time fruit sorting on a farm conveyor belt, completely offline. No cloud. Just pure local AI. The speed was shocking.
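The operation fusing mentioned above is easier to picture with a toy case. TensorRT’s real fusion passes are more elaborate and hardware-specific, so treat this as a sketch of the general idea only: folding a batch-normalization step into the linear layer before it, so two operations become one with the same output. All names and numbers are hypothetical.

```python
import math


def linear(x, w, b):
    """A single-output linear layer: dot(w, x) + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def batch_norm(y, gamma, beta, mean, var, eps=1e-5):
    """Inference-time batch normalization of a scalar activation."""
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fuse_linear_bn(w, b, gamma, beta, mean, var, eps=1e-5):
    """Fold the batch-norm constants into new linear weights and bias."""
    k = gamma / math.sqrt(var + eps)
    return [wi * k for wi in w], (b - mean) * k + beta


# Illustrative values only.
x = [0.5, -1.0, 2.0]
w, b = [0.2, 0.4, -0.1], 0.3
gamma, beta, mean, var = 1.5, 0.1, 0.05, 0.8

unfused = batch_norm(linear(x, w, b), gamma, beta, mean, var)  # two ops
fused_w, fused_b = fuse_linear_bn(w, b, gamma, beta, mean, var)
fused = linear(x, fused_w, fused_b)                            # one op

print("unfused:", unfused)
print("fused:  ", fused)
```

The folding happens once, ahead of time, so every later inference skips a whole pass over the activations.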

TinyML Frameworks: ML That Fits on a Fingernail

This is where things get weird in the best way. We’re talking models that run on microcontrollers with:

- 256 KB RAM

- 1–10 mW power consumption

The frameworks and tools that make this possible include:

- TensorFlow Lite Micro

- Edge Impulse

- CMSIS-NN

- microTVM

TinyML makes it possible to put intelligence into sensors:

- Soil monitors

- Wearable patches

- Smart tags

- Battery-less devices

Imagine a health sensor the size of a postage stamp running an AI model while powered by body heat. That’s not science fiction anymore.
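A quick back-of-the-envelope check shows why the 256 KB figure matters so much. The sketch below assumes, for simplicity, that weights and activation buffers share the same RAM (on many microcontrollers the weights sit in flash instead). The parameter count and activation size are hypothetical.

```python
RAM_BYTES = 256 * 1024  # the 256 KB budget mentioned above


def weight_bytes(num_params, bits_per_weight):
    """Storage needed for the weights alone."""
    return num_params * bits_per_weight // 8


def fits_in_ram(num_params, bits_per_weight, activation_bytes,
                ram_bytes=RAM_BYTES):
    """True if weights plus working buffers fit in the RAM budget."""
    total = weight_bytes(num_params, bits_per_weight) + activation_bytes
    return total <= ram_bytes


# Hypothetical model: 100,000 parameters, 64 KB of activation buffers.
NUM_PARAMS = 100_000
ACTIVATION_BYTES = 64 * 1024

fits_fp32 = fits_in_ram(NUM_PARAMS, 32, ACTIVATION_BYTES)
fits_int8 = fits_in_ram(NUM_PARAMS, 8, ACTIVATION_BYTES)

print("float32 fits:", fits_fp32)
print("int8 fits:   ", fits_int8)
```

Under these assumptions the float32 version overshoots the budget while the int8 version fits with room to spare, which is why quantization is close to mandatory at this scale.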

What Makes On-Device AI So Much Faster?

Speed isn’t just about raw compute. It’s about where the compute happens.

Here’s the usual cloud path:

Device → Uplink → Server → Inference → Downlink → Device

Even if everything goes perfectly, you get latency. And if your connection dips, you feel it instantly.

On-device inference looks like:

Device → Inference → Result

That’s it.

No waiting.

No bouncing between continents.

It reminds me of something someone said at a conference last year. A researcher walked on stage holding a tiny microcontroller and said, “This little board can outpace the cloud when the cloud is 200 ms away.” People laughed for a second, then realized he wasn’t joking.

When you cut out the network, the device becomes the fastest server you own.
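The two paths above can be written as a tiny latency budget. The numbers are illustrative assumptions, not measurements: a cloud that is 200 ms away in total (split evenly between uplink and downlink), a fast 5 ms server-side inference, and an 85 ms on-device inference like the Raspberry Pi figure mentioned earlier.

```python
def cloud_round_trip_ms(uplink_ms, server_inference_ms, downlink_ms):
    """Device -> Uplink -> Server -> Inference -> Downlink -> Device."""
    return uplink_ms + server_inference_ms + downlink_ms


def on_device_ms(device_inference_ms):
    """Device -> Inference -> Result: no network terms at all."""
    return device_inference_ms


# Illustrative numbers only (assumptions, not benchmarks).
cloud = cloud_round_trip_ms(uplink_ms=100, server_inference_ms=5,
                            downlink_ms=100)
local = on_device_ms(device_inference_ms=85)
local_is_faster = local < cloud

print(f"cloud: {cloud} ms, on-device: {local} ms, "
      f"local wins: {local_is_faster}")
```

Even with a server that infers far faster than the device, the network terms dominate the total, so the slower local chip still wins.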

Examples of Edge AI Devices You Probably Use Already

You may not think of these as “AI devices,” but they are quietly running hundreds or thousands of micro-inferences a day.

Smartphones

Face unlock, battery optimization, noise reduction, offline translation.

Smartwatches

Heart rate analysis, fall detection, fitness pattern modeling.

Earbuds

Adaptive noise canceling, ambient mode recognition.

Home Assistants

Wake-word detection running locally before cloud requests.

Cameras

Object tracking, motion detection, low-light enhancement.

Cars

Driver monitoring, lane deviation warnings, pedestrian detection.

Drones

Obstacle avoidance, path planning.

Industrial Sensors

Predictive maintenance, anomaly detection.

It’s wild how normal this all feels now. A few years ago, half of these tasks required a data center.

Which AI Models Do Edge Devices Use?

Not all models make sense on the edge. Some are too heavy. Some don’t benefit from local inference. But several categories fit perfectly:

- Lightweight CNNs

- Distilled transformers

- Quantized classification models

- Small language models (2B–8B range, optimized)

- Audio recognition models

- Sensor fusion models

- Gesture and intent models

The trick is balancing accuracy with energy. A model that drains half your battery after three inferences isn’t practical.

Model compression techniques like:

- Quantization

- Pruning

- Distillation

- Weight sharing

- Operator fusion

…turn massive cloud architectures into pocket-friendly AI.
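Pruning is the easiest of these to show in a few lines. This is a minimal sketch of magnitude pruning, assuming the simplest possible rule: zero out the smallest-magnitude fraction of the weights. The function name and sample weights are hypothetical, and real toolchains usually prune gradually and fine-tune afterward.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Illustrative weights only.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.1, -0.9]
pruned = magnitude_prune(weights, sparsity=0.5)

print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0, 0.0, -0.9]
```

The zeros only pay off when the runtime or hardware can skip them, which is why sparse-model support on the chip matters.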

A small detail most people don’t realize:

An optimized edge model doesn’t just run faster. It can also behave more predictably, because it’s built to make quick decisions from imperfect data.

The Human Side of On-Device AI: Why It Matters Emotionally

Speed is great. Privacy is great. But the real connection happens in tiny moments, like when your device understands your voice over the sound of rushing wind during a morning walk. Or when your earbuds know when you’re talking and let ambient sound through without you flipping a switch.

These micro-moments add up. They feel almost like your devices are learning alongside you. Not in a creepy way, just attentive, helpful, quiet.

I’ve tested plenty of edge devices that fail to understand context. You tap, swipe, wait, and it still misfires. But the ones that get it right? Those are the ones that feel like the future.

You’re not waiting for a server to notice you.

The intelligence is already here, right next to you.

What Edge-AI Frameworks Still Struggle With

It’s not all polished tech magic. Some issues still exist, and they’re worth naming:

Power constraints

Running a model constantly can warm a device or drain the battery.

Memory limits

Even with compression, some models just won’t fit on small chips.

Update complexity

Updating a cloud model is simple. Updating millions of devices? Much harder.

Hardware fragmentation

Android devices have wildly different NPUs, DSPs, and GPUs.

Security of local models

Keeping everything on-device is more private, but the model itself can be reverse-engineered if not protected.

Still, the trajectory is clear. Hardware is evolving fast. And the smarter devices get, the fewer trips they make to the cloud.
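To put a rough number on the power constraint above, here is a small duty-cycle sketch. It assumes a 675 mWh energy budget (roughly a 3 V coin cell at 225 mAh), a 10 mW draw while the model runs, and a 0.01 mW sleep draw. All of these are illustrative assumptions, not specs for any particular device.

```python
def average_power_mw(active_mw, sleep_mw, duty_cycle):
    """Average draw when the model runs only a fraction of the time."""
    return active_mw * duty_cycle + sleep_mw * (1.0 - duty_cycle)


def battery_life_hours(capacity_mwh, avg_power_mw):
    """Hours of runtime for a given energy budget and average draw."""
    return capacity_mwh / avg_power_mw


CAPACITY_MWH = 675.0  # assumed energy budget

always_on = average_power_mw(active_mw=10.0, sleep_mw=0.01, duty_cycle=1.0)
duty_cycled = average_power_mw(active_mw=10.0, sleep_mw=0.01, duty_cycle=0.05)

hours_always_on = battery_life_hours(CAPACITY_MWH, always_on)
hours_duty_cycled = battery_life_hours(CAPACITY_MWH, duty_cycled)

print(f"always on:   {hours_always_on:.1f} h")
print(f"5% duty:     {hours_duty_cycled:.1f} h")
```

Running the model 5% of the time instead of constantly stretches the same battery from under three days to several weeks under these assumptions, which is why so many edge devices wake a model only when a cheap trigger fires.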

What’s Coming Next: The Near-Future of Edge Intelligence

We’re heading toward a world where:

1. Small Language Models Become Native

Phones running 3B–7B models offline, enabling:

- Offline chat

- Real-time summarization

- Private assistants

It’s already starting.

2. Multimodal Edge AI

Phones understanding:

- Images

- Audio

- Touch

- Motion

- Context

…and blending them together instantly.

Imagine your AR glasses noticing what you’re looking at and giving suggestions without needing a network.

3. Sensor Networks That Think

Smart homes where each sensor runs its own inference rather than pinging a hub.

4. Wearables That Predict Before You Ask

Not just tracking steps but noticing early fatigue, posture shifts, or emotional patterns.

5. Batteryless AI Sensors

Energy-harvesting devices with tiny models that require microwatts.

A researcher once joked, “AI will outlive batteries before batteries outlive AI.” He might actually be right.

6. Real-Time Privacy

Since nothing leaves the device, privacy becomes a default, not a feature.

This changes the relationship people have with technology. You trust what you can see, and people can see how edge inference avoids the cloud entirely.

Where All of This Leaves Us

Edge-AI on-device inference frameworks aren’t just a technical breakthrough. They’re a shift in how technology feels. Faster, more personal, less dependent. A little more like a companion and less like an interface.

And maybe that’s the part worth sitting with for a moment.

When intelligence moves closer to where life actually happens, devices stop feeling like tools and start feeling like extensions of your own intuition.

The world of edge-AI on-device inference frameworks is only going to grow from here: smaller models, smarter chips, more natural interactions.

If you’re building the future, this is the layer worth paying attention to.

And if you’re simply living in it, you’re already seeing the benefits every time your devices quietly respond without asking for permission from the cloud.

Ethan Cole is an AI and emerging tech analyst who explains future tools, wearables, and automation in simple terms. He brings curiosity and clarity together, helping readers understand what’s coming next without feeling overwhelmed.